Research

Research Vision

I build reliable perception for autonomous systems. My work develops methods that make vision models robust to real-world distribution shift, quantify uncertainty to support safer decisions, and reduce performance gaps across operating conditions, so that robots know when to trust what they see, when to adapt, and when to act cautiously. I focus on practical evaluation and deployment constraints, prioritizing techniques that improve reliability without heavy retraining or brittle assumptions.

Research Themes

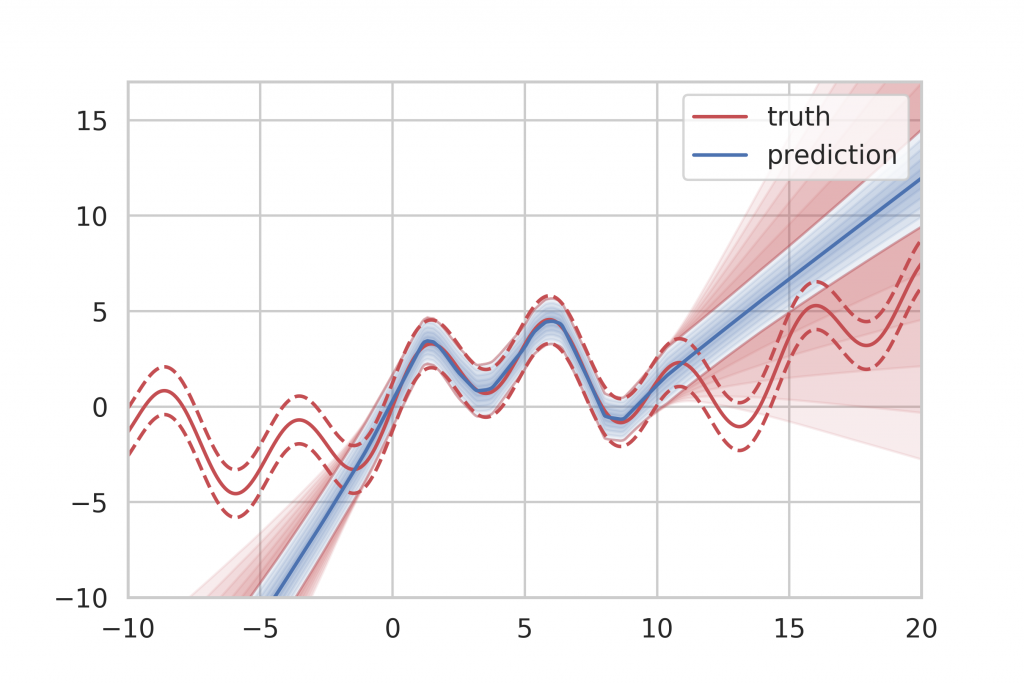

Uncertainty-Aware Perception

Real-world sensing is uncertain. I design methods that quantify predictive uncertainty so robots can assess reliability, detect failure modes, and act more safely when perception is ambiguous.

Domain Adaptation & Generalization

Robots encounter conditions that differ from training. I study shift detection and robustness techniques that help models remain stable across environments, sensors, weather, and viewpoints.

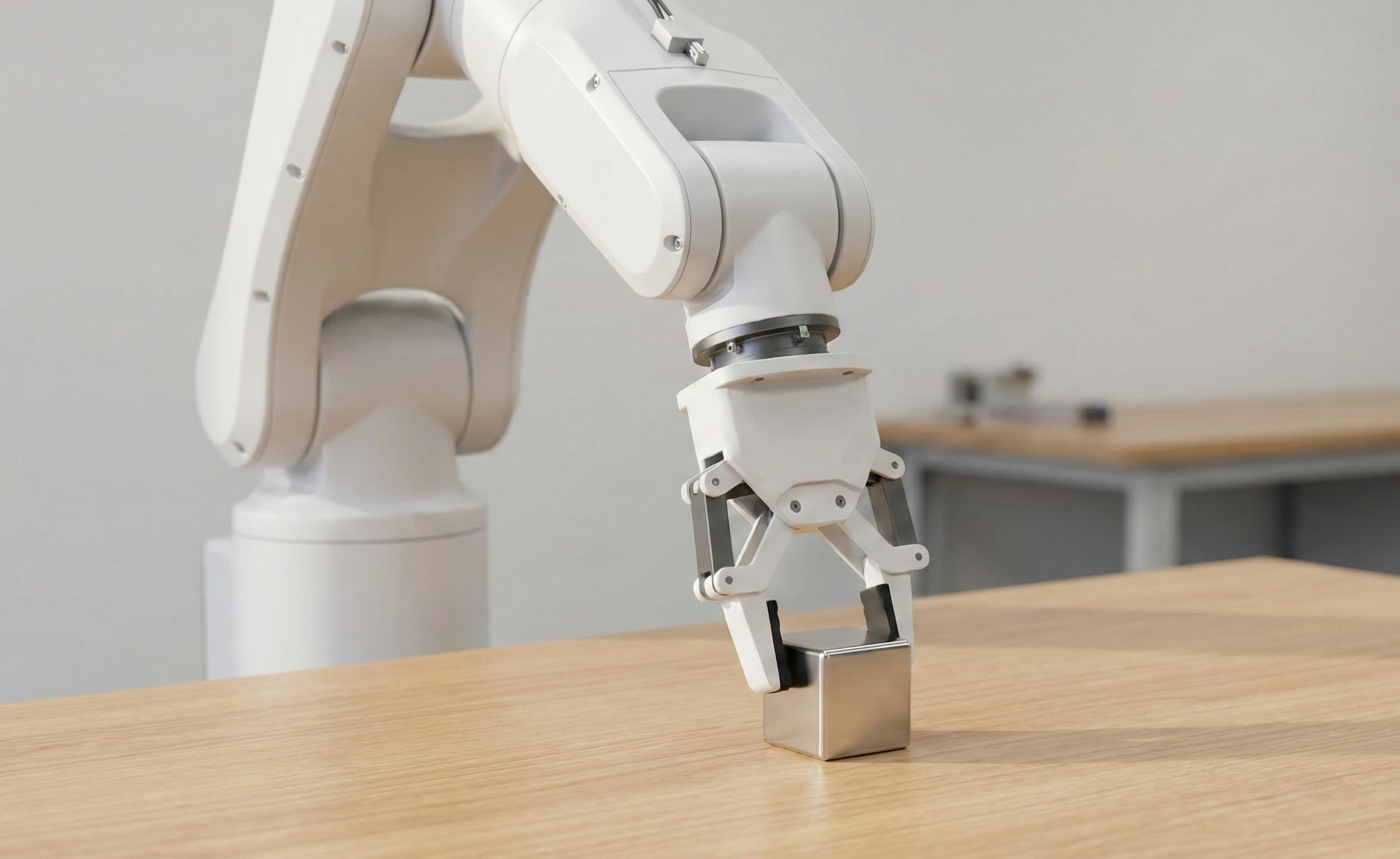

Physical AI & Continual Adaptation

Autonomous systems operate in changing physical environments. I study how perception models adapt after deployment through continual learning and data-efficient updates, enabling robots to remain reliable over time.